This article looks at the minimum requirements for implementing a private DNS zone and Private Link in a test setup, so that PaaS services can be used through private endpoints. The example uses blob storage, but the approach can be extended to most other PaaS services that support private endpoints.

The concept of Azure Private Link is a bit complicated, and people often get confused about how it works. This article aims to break it down into its simplest setup.

The following components are required:

- A VNet and a subnet

- A default NSG (that should be associated with the subnet)

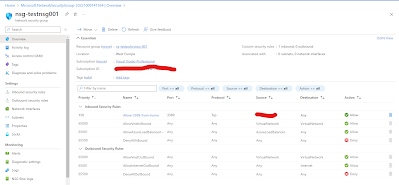

- An inbound rule on the NSG that allows port 3389 from your external IP

- A private DNS zone (privatelink.blob.core.windows.net)

- A VNet link between the private DNS zone and the VNet (it's a configuration on the private DNS zone)

- A test VM running in the subnet (we need a source with a local IP to test connectivity towards the storage account with the private endpoint)

- A public IP associated with the VM

- AzCopy installed on the test VM

- A storage account with a private endpoint (including an A record in the private DNS zone)

Once it is all set up, we will disable all public access on the storage account and verify that the VM connects to the storage account on its private IP address. After that, we will copy a file from the VM to the storage account and the other way around (using AzCopy) to prove that it works.

Steps

First, deploy a VNet and one subnet (see the example below). There are no specific requirements for this part.

Then create a default NSG and associate it with the subnet (this is not strictly required, but it is good practice and will come in handy later):

Create a private DNS zone named: privatelink.blob.core.windows.net
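The zone name follows a fixed pattern, which matters if you later extend this setup to other storage sub-resources. As a rough sketch (the helper name `private_dns_zone_for` is made up for illustration, and the mapping assumes storage sub-resources in the Azure public cloud only), the name can be derived like this:

```python
# Hypothetical helper: maps a storage sub-resource to its private DNS zone name.
# Assumption: Azure public cloud storage sub-resources only. Other PaaS services
# use their own zone names, so check the documentation before extending this.
STORAGE_SUBRESOURCES = {"blob", "file", "queue", "table", "dfs", "web"}


def private_dns_zone_for(subresource: str) -> str:
    """Return the private DNS zone name for a storage sub-resource."""
    key = subresource.strip().lower()
    if key not in STORAGE_SUBRESOURCES:
        raise ValueError(f"unknown storage sub-resource: {subresource!r}")
    return f"privatelink.{key}.core.windows.net"


print(private_dns_zone_for("blob"))
```

For this article only the `blob` entry is needed; the zone name has to match exactly, otherwise the A record created later will never be consulted.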

Create a VNet link between the private DNS zone and the VNet. Go to the private DNS zone -> privatelink.blob.core.windows.net -> Virtual Network Links -> click Add

Give the link a name and choose the VNet you just deployed. Don't check the auto-registration box; that feature registers DNS records for VMs and is not in scope for this test.

Deploy a virtual machine with a public IP (so that you can RDP to it). You can do that via the portal, or you can use a test Windows Server 2022 VM ARM template; see more info here (note that the VM from this template does not have a public IP, so one will have to be created separately and associated with the NIC after deployment).

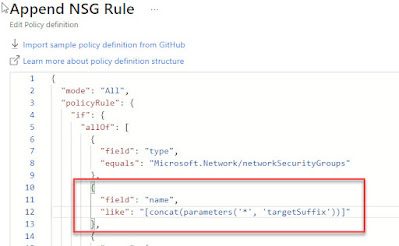

Add a rule to the NSG that allows inbound TCP traffic on port 3389 from your external IP address. This is so that you can RDP to the test VM:
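If you prefer to keep the rule in a template rather than click it together in the portal, the sketch below builds the rule in the shape used by the `securityRules` entries of an ARM template. The rule name and the priority are assumptions chosen for illustration, and `203.0.113.10` is a documentation address standing in for your own external IP.

```python
import ipaddress
import json


def rdp_allow_rule(external_ip: str, priority: int = 300) -> dict:
    """Build an inbound allow rule for RDP (TCP 3389) from one IPv4 address."""
    # Validate the address so a typo fails here instead of at deployment time.
    source = ipaddress.IPv4Address(external_ip)
    return {
        "name": "Allow-RDP-From-My-IP",  # hypothetical rule name
        "properties": {
            "priority": priority,  # assumed value; must be unique within the NSG
            "direction": "Inbound",
            "access": "Allow",
            "protocol": "Tcp",
            "sourceAddressPrefix": f"{source}/32",  # a single host, not a range
            "sourcePortRange": "*",
            "destinationAddressPrefix": "*",
            "destinationPortRange": "3389",
        },
    }


# 203.0.113.10 is a placeholder for your external IP address.
print(json.dumps(rdp_allow_rule("203.0.113.10"), indent=2))
```

Restricting the source to a single `/32` address keeps RDP closed to the rest of the internet, which is the point of adding your external IP instead of allowing any source.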

RDP to the test VM, download AzCopy v10, and verify that it runs with the command: .\azcopy (this will show the version and some help info).

Create a storage account and, under Networking -> Firewalls and virtual networks, choose "Enabled from selected virtual networks and IP addresses" and add your external IP address. We will change this to 'Disabled' later, but for now we need it to be able to create a blob container and add a file from the Azure portal. It also lets us browse the content of the blob containers.

Still under Networking, choose the Private Endpoint connections tab and add a private endpoint.

Give the private endpoint a name, e.g. pe-<name of storage account>, and choose the VNet and subnet that were created earlier. When it asks about integration with a private DNS zone, choose "No". If you choose "Yes", it will create a new private DNS zone, but we have already created a zone in a previous step.

Adding a private endpoint creates a virtual network interface (vNIC) in the subnet, assigns it a local IP address from the subnet's range (allocated dynamically by default), and associates that vNIC with the storage account so that the account can be reached internally.

Once the private endpoint is created, it can be seen in the Private endpoint connections tab, see above. We need to take note of the assigned local IP address. To do this, click on the private endpoint link and then go to DNS Configuration, see below:

Now we need to create an A record in the private DNS zone we created earlier that points to the IP address we just noted. Go to Private DNS Zones -> privatelink.blob.core.windows.net -> Click "+ Record set". Under name, add the storage account name and under IP address, add the IP address that was recorded in the previous step, see below.
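Note that the record name is only the storage account name, because the zone supplies the rest of the FQDN. Once a private endpoint exists, the public name `<account>.blob.core.windows.net` is answered as an alias for `<account>.privatelink.blob.core.windows.net`, and that second name is what the private zone resolves. A minimal sketch of the record, assuming a placeholder account name `mystorageacct` and the IP address used later in this article (the function and field names are made up for illustration):

```python
import ipaddress

PRIVATE_ZONE = "privatelink.blob.core.windows.net"


def a_record_for(storage_account: str, private_ip: str) -> dict:
    """Describe the A record that the private DNS zone needs."""
    ip = ipaddress.ip_address(private_ip)
    if not ip.is_private:
        # A public address here means the wrong IP was noted down.
        raise ValueError(f"{private_ip} is not a private address")
    name = storage_account.lower()
    return {
        "record_set_name": name,  # what goes in the "Name" field
        "type": "A",
        "ip_address": str(ip),
        # Name that the public FQDN is aliased to once a private endpoint exists.
        "fqdn": f"{name}.{PRIVATE_ZONE}",
    }


# "mystorageacct" is a placeholder; 10.100.0.8 is the example IP from this article.
print(a_record_for("mystorageacct", "10.100.0.8"))
```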

Now we will verify that the test VM resolves the regular storage account FQDN to the local IP of the storage account (if this doesn't work, the name will resolve to a public IP address and you will know that something went wrong).

Jump to the test VM and start a command prompt:

Run: nslookup <storageaccountname>.blob.core.windows.net

The result should be the local IP, see below. The red box in the screenshot shows the result without a private endpoint, and the green box shows the result after the private endpoint has been added. In the green box, the local IP 10.100.0.8 is correctly returned:
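The same check can be scripted, which is handy if you repeat the test. The sketch below is one possible way to do it, assuming Python is available on the test VM; the function name is made up, and the resolver is passed in as a parameter so that the logic can be tried without any network access.

```python
import ipaddress
import socket


def resolves_to_private_ip(hostname: str, resolve=socket.gethostbyname) -> bool:
    """Return True if the hostname resolves to a non-public IPv4 address."""
    address = resolve(hostname)
    return ipaddress.ip_address(address).is_private


# Offline demonstration with stub resolvers instead of a real DNS lookup.
print(resolves_to_private_ip("example", resolve=lambda host: "10.100.0.8"))
print(resolves_to_private_ip("example", resolve=lambda host: "20.60.0.1"))

# On the test VM, call it with your own storage account FQDN, for example:
# resolves_to_private_ip("<storageaccountname>.blob.core.windows.net")
```

A `False` result on the VM corresponds to the red box in the screenshot: the name still resolves to a public address, so the VNet link or the A record is probably missing or wrong.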

To verify that we can copy a file from the test VM to a blob container and vice versa, first we will create a dummy file on the VM and then a dummy file in the storage account (any txt file will do).

Go to the storage account and create a new container (in this example I call it webcontent, any name will do):

Then go into the container and click Upload to add a file (in this example it's called getmefile.txt):

For the VM to be able to access files in the storage account, we need a SAS token which will be used with the AzCopy command. Go to the storage account -> Shared access signature -> check Blob and check all three resource types (basically just allow all if you're in doubt as this is only for testing) -> click Generate SAS and connection string.

Then copy or take a note of the 'Blob service SAS URL' value at the bottom of the page:

Then jump back to the test VM.

I created a dummy file called index.html and placed it in the C:\Users\localadmin folder. AzCopy is located in the same folder.

To test that we can copy a file from the VM to the storage account, start a PowerShell window (will also work from a regular command prompt) and run the following command:

.\azcopy copy ".\index.html" "https://<storage account name>.blob.core.windows.net/webcontent/?sv=2022-11-02&ss<link has been shortened>U%3D"

The copy command takes a source and a destination. The source is the index.html file in the current folder. The destination is the Blob service SAS URL, modified by adding the blob container name and a '/' after the FQDN and before the SAS token info (marked above in bold), also see below:
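Editing the URL by hand is easy to get wrong, so here is a small sketch of the same modification done programmatically. The function name and the shortened SAS URL are made up for illustration; a real SAS URL carries more query parameters, which are left untouched.

```python
from urllib.parse import urlsplit, urlunsplit


def sas_url_for_container(blob_service_sas_url: str, container: str) -> str:
    """Insert '<container>/' between the FQDN and the SAS token."""
    parts = urlsplit(blob_service_sas_url)
    # Only the path changes; the query string (the SAS token) is kept as-is.
    return urlunsplit(parts._replace(path=f"/{container.strip('/')}/"))


# Made-up, shortened SAS URL for illustration only.
example = "https://mystorageacct.blob.core.windows.net/?sv=2022-11-02&sig=abc%3D"
print(sas_url_for_container(example, "webcontent"))
```

Because only the path component is replaced, the percent-encoded characters in the signature are not re-encoded, which would otherwise invalidate the token.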

For the next test, we copy the content of the blob container we created earlier including the file we added and place it on the local test VM:

It is basically just a matter of swapping the source and destination in the AzCopy command (again adding the blob container name after the FQDN):

.\azcopy copy "https://<storage account name>.blob.core.windows.net/webcontent/?sv=2022-11-<link has been shortened>2BU%3D" "C:\Users\localadmin\" --recursive
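If you want to script both copy directions, the sketch below builds the argument list for `azcopy copy` and hands it to `subprocess`. The helper names are hypothetical, the default path assumes AzCopy sits in the current folder as in this article, and the URLs are placeholders you would replace with your own SAS URLs.

```python
import subprocess
from typing import List


def azcopy_copy_args(source: str, destination: str, recursive: bool = False,
                     azcopy_path: str = r".\azcopy") -> List[str]:
    """Build the argument list for 'azcopy copy <source> <destination>'."""
    args = [azcopy_path, "copy", source, destination]
    if recursive:
        # Needed when the source is a container or folder rather than one file.
        args.append("--recursive")
    return args


def run_azcopy(args: List[str]) -> None:
    """Run AzCopy and raise if it exits with a non-zero code."""
    subprocess.run(args, check=True)


# Placeholder URL; nothing is executed here, the command is only printed.
download = azcopy_copy_args("https://<container SAS URL>", "C:\\Users\\localadmin\\",
                            recursive=True)
print(download)
```

Passing the arguments as a list avoids having to quote the SAS URL for the shell, which matters because the token contains `&` characters.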

To ensure that public access is entirely disabled, you can now go to the storage account under Networking -> Firewalls and virtual networks -> Choose Disabled and Save.

With this change you can no longer browse content in the blob containers via the portal.

However, you can re-run the two AzCopy commands above and they will still work.

If you want to verify that the files are available in the blob container, you can access them via the browser (from the test VM) by using the 'Blob service SAS URL' and then modifying it by appending the container name and file name after the FQDN:

https://<storage account name>.blob.core.windows.net/webcontent/getmefile.txt?sv=2022-11-02&s<link has been shortened>2BU%3D

Another way to represent the same URL is:

https://<storage account name>.blob.core.windows.net/<blob container>/<file name><SAS Token>
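Put as code, the pattern looks roughly like the sketch below. The function name and example values are made up; the one assumption worth calling out is that the SAS token may or may not start with a `?` depending on where you copy it from, so the sketch normalizes that.

```python
from urllib.parse import quote


def blob_url(account: str, container: str, blob_name: str, sas_token: str) -> str:
    """Compose https://<account>.blob.core.windows.net/<container>/<blob>?<token>."""
    # Accept the token with or without its leading '?'.
    token = sas_token.lstrip("?")
    # Percent-encode the blob name so spaces and similar characters survive.
    path = f"{container}/{quote(blob_name)}"
    return f"https://{account}.blob.core.windows.net/{path}?{token}"


# Placeholder account name and a made-up, shortened token for illustration.
print(blob_url("mystorageacct", "webcontent", "getmefile.txt", "?sv=2022-11-02&sig=abc%3D"))
```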

The reason you cannot just use the FQDN plus the container and file name is that network access alone is not enough. Even when no firewall on the storage account is blocking access, you still need to present the required credentials to view the content of the storage account, which in this case is the SAS token that is added to the URL.

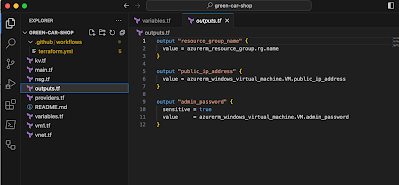

If you want to get just the SAS token and not the full Blob service SAS URL, it is available under the storage account -> 'Shared access signature' in the same location as the Blob service SAS URL, see below:

With this we have a proven setup where traffic between a VM and a storage account only runs over the Private Link and all traffic is handled via the internal network using only local IP addresses.